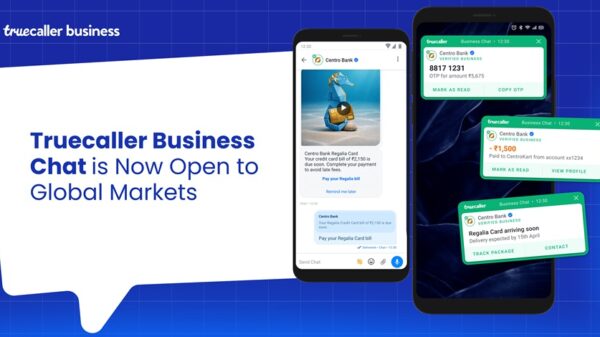

Meta Superintelligence Labs (MSL) unveiled Muse Spark Tuesday, its first large language model in a new Muse series, designed from scratch to deliver “personal superintelligence”—an AI assistant tailored to users’ real-world needs like complex reasoning in science, math and health.

A New Model: Muse Spark

Over the last nine months, Meta Superintelligence Labs rebuilt our AI stack from the ground up, moving faster than any development cycle we have run before. Muse Spark is the first model in our new Muse series — a deliberate and scientific approach to model scaling where each generation validates and builds on the last before we go bigger. This initial model is small and fast by design, yet capable enough to reason through complex questions in science, math, and health. It is a powerful foundation, and the next generation is already in development. Muse Spark now powers the Meta AI assistant in the Meta AI app and meta.ai, built to support complex reasoning and multimodal tasks.

What’s Changed with Meta AI

The Meta AI app and meta.ai are getting an upgrade today, along with a new look. Whether you need a quick answer or help with complex problems that need strong reasoning, Meta AI now handles both. You can switch between modes depending on the task, and Meta AI can launch multiple subagents in parallel to tackle your question. For example, when you plan a family trip to Florida, one agent can draft the itinerary, another can compare Orlando vs. the Keys, and a third can find kid-friendly activities, all at the same time, giving you a better answer, faster.

Ask Meta AI: It Understands

The real world moves fast, and most of it does not fit into a text box. That is why we built strong multimodal perception into Muse Spark, so Meta AI can see and understand what you are looking at, not just read what you type. Snap a photo of an airport snack shelf and Meta AI can identify and rank the snacks with the most protein — no label-squinting required. Scan a product and ask how it compares to alternatives. It is the difference between an AI that waits for you to explain the world and one that can simply look at the world with you. And when Meta AI powered by Muse Spark comes to our AI glasses, the assistant will be able to better see and understand the world around you.

Multimodal perception is especially valuable for health. With Muse Spark, Meta AI is now able to help you navigate health questions with more detailed responses, including some questions involving images and charts. Health is one of the top reasons people turn to AI, so we worked with a team of physicians to develop the model’s ability to provide helpful information on common health questions and concerns.

Muse Spark excels at visual coding, letting you create custom websites and mini-games straight from a prompt. Ask Meta AI to build a dashboard for planning a big surprise party, spin up a retro arcade game to chase a high score, or launch a whimsical flight simulator — and share any of them with friends.

Ask Meta AI: It’s Plugged Into What You Care About

Meta AI can now help you discover what to wear, how to style a room, or what to buy for someone you know. Shopping mode draws from the styling inspiration and brand storytelling already happening across our apps, surfacing ideas from the creators and communities people already follow.

And when you are looking up a place to go or a topic that is trending, Meta AI surfaces rich and relevant context right alongside the conversation. Tap into a location and see public posts from locals who know the area. Ask what people are buzzing about and get the full picture, pulled from content and community posts. It is context from your people, right where you need it.

Looking Ahead

The Meta AI app and meta.ai will have the upgraded experience with Instant and Thinking modes everywhere they are available today. The new Meta AI features are starting to roll out in the US on both the app and meta.ai. In the coming weeks, we will bring these new modes and capabilities to more countries and to the places where people use Meta AI, including Instagram, Facebook, Messenger, WhatsApp, and our AI glasses, where these perception capabilities become even more powerful. We are also opening access to the underlying technology. It will be available in private preview via API to select partners, and we hope to open-source future versions of the model.

This is only the start. As we expand these features, expect richer, more visual results, with Reels, photos, and posts woven directly into your answers, with credit back to the content creators. And as our models improve, we’ll continue to build safeguards in areas like safety and privacy, starting with the strengthened risk framework and other protections we’re sharing today.

The future of Meta AI is rooted in the relationships and context already at the center of your life. We are building toward personal superintelligence — an AI that does not just answer your questions but truly understands your world because it is built on it.
